Embeddings in chatbots: understanding content without seeing the data

What is an embedding?

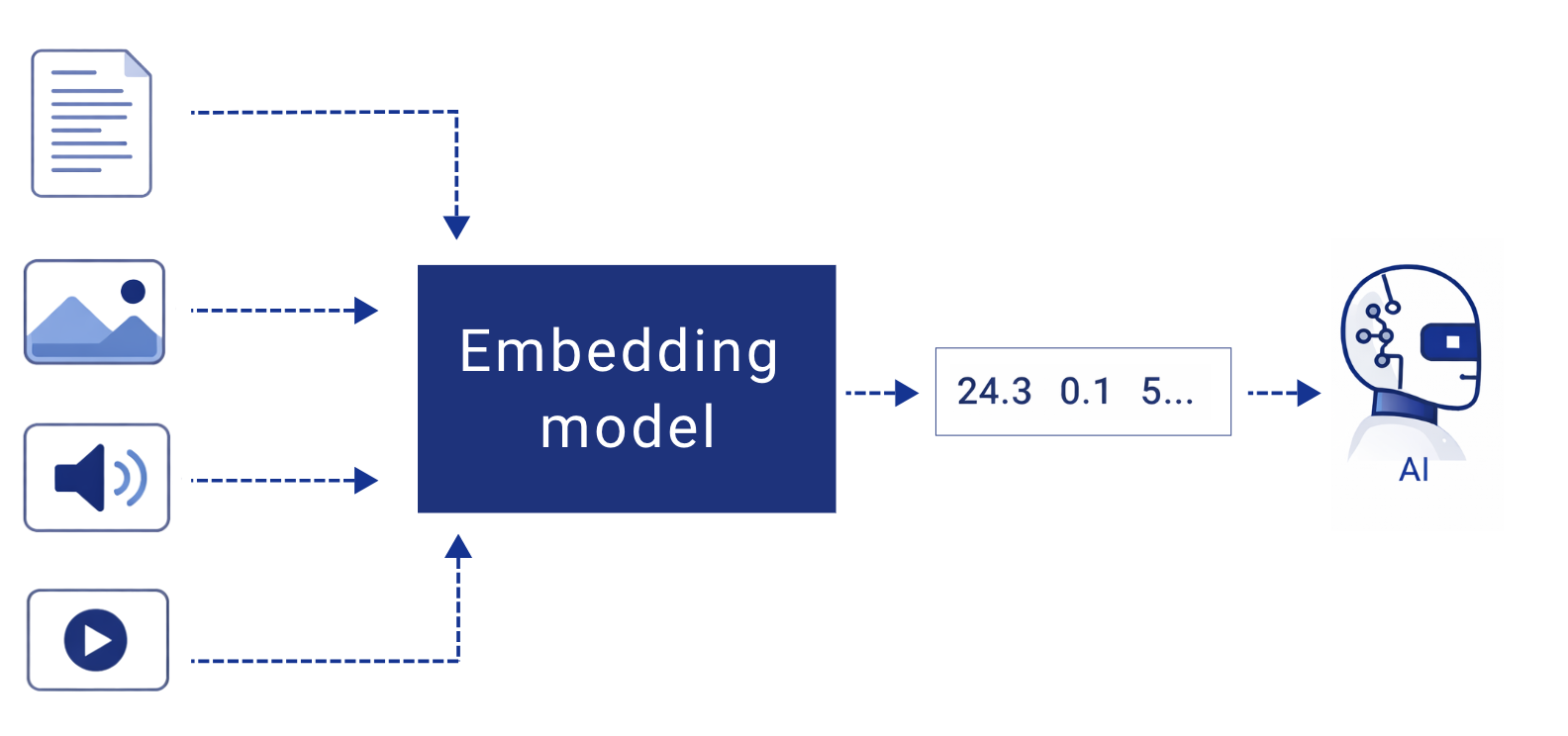

An embedding converts information (text, images, or audio) into a list of numbers, called a vector, that represents its meaning. This lets an AI system work with relationships between concepts rather than with exact words or sentences.

Imagine you have a confidential document.

Instead of sharing it as it is, this happens:

✗ The text is not shared

✗ The words are not shared

✓ Only a set of “coordinates” indicating what it is about is shared

➡ That is an embedding.

It is a translation of the meaning of the text into numbers.

A system that receives only the embedding does not see the original content, just a mathematical version that lets it determine:

• Whether two texts are about the same topic

• Which pieces of information are related

• Which fragment is most relevant to answer a question

It is like telling someone “this is about invoicing” without showing them the invoice.
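
To make the idea of "coordinates" concrete, here is a minimal sketch in Python. The three-number vectors are invented for illustration (real embedding models produce hundreds or thousands of dimensions), and the variable names are hypothetical. Cosine similarity is one common way to measure how close two embeddings are, not necessarily the one a given system uses.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, made up for this example.
invoice_march = [0.9, 0.1, 0.0]
invoice_april = [0.8, 0.2, 0.1]
holiday_policy = [0.1, 0.0, 0.9]

# Two invoicing documents should score higher than an invoice and a holiday policy.
same_topic = cosine_similarity(invoice_march, invoice_april)
different_topic = cosine_similarity(invoice_march, holiday_policy)
print(same_topic > different_topic)
```

The comparison uses only the numbers. The text of the invoices never appears in this step.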

What are embeddings used for?

Thanks to embeddings, systems can:

• Search information by meaning, not by exact words

• Compare documents and detect similarities

• Provide more accurate answers by retrieving only the relevant fragments instead of processing full texts

This makes models more efficient and easier to control.
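
Searching by meaning can be sketched as ranking stored vectors by their similarity to the query vector. In the sketch below, the document titles, the vectors, and the `search` helper are all assumptions made for illustration. In a real system, an embedding model would produce both the document vectors and the query vector.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical index: title -> invented embedding.
documents = {
    "Payment terms for supplier bills": [0.9, 0.1, 0.1],
    "Office holiday calendar": [0.1, 0.9, 0.0],
    "Password reset procedure": [0.0, 0.1, 0.9],
}

# Assumed embedding of the query "When is the invoice due?",
# which shares no keywords with the best-matching title.
query_vector = [0.8, 0.2, 0.1]

def search(query, index, top_k=2):
    """Return the top_k titles ordered from most to least similar."""
    scored = [(cosine_similarity(query, vec), title) for title, vec in index.items()]
    scored.sort(reverse=True)
    return [title for _, title in scored[:top_k]]

results = search(query_vector, documents)
print(results)
```

A keyword search for "invoice" would miss the first document. The vector comparison finds it because the two vectors point in a similar direction.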

Embeddings and data protection

It is important to be clear: embeddings alone do not guarantee anonymity or absolute security. For this reason, at Cloud Levante embeddings are not used in isolation, but as one additional layer within a broader strategy of security, confidentiality, and data privacy.

Why are embeddings used in these systems?

1. Lower exposure of the original data

Full sensitive documents are not sent directly to the language model.

They are first transformed into numbers that represent their meaning, and search and comparison run on those numbers.

2. The content is not readable

Access to an embedding does not allow the original text to be easily read or reconstructed.

3. The model does not retain your data

In architectures based on Retrieval-Augmented Generation (RAG):

• Data remains within your systems

• The model only queries specific fragments in real time

• Data is not used to train or improve the model
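
The retrieval step of such an architecture can be sketched as follows. This is a simplified illustration under stated assumptions, not a description of any specific product: the fragment texts and vectors are invented, `FRAGMENTS`, `retrieve`, and `build_prompt` are hypothetical names, and the call to the language model itself is left out.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Fragments and their vectors stay in a store you control (here, a plain list).
# Texts and vectors are made up for this example.
FRAGMENTS = [
    ("Invoices are payable within 30 days.", [0.9, 0.1, 0.0]),
    ("The office is closed on public holidays.", [0.1, 0.9, 0.1]),
    ("Late invoices incur a 2% surcharge.", [0.8, 0.0, 0.2]),
]

def retrieve(query_vector, top_k=2):
    """Pick the top_k fragments most similar to the query."""
    ranked = sorted(
        FRAGMENTS,
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:top_k]]

def build_prompt(question, query_vector):
    """Build a prompt that contains only the retrieved fragments."""
    context = "\n".join(retrieve(query_vector))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

# Assumed embedding of the question, invented for illustration.
prompt = build_prompt("What happens if I pay an invoice late?", [0.85, 0.05, 0.1])
print(prompt)
```

Only the fragments selected at query time are placed in the prompt. The rest of the store is never sent, and nothing in this flow involves training the model.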

4. Control and isolation

Embeddings can:

• Reside in controlled infrastructures

• Have restricted access

• Be deleted or refreshed when necessary
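
These three controls can be illustrated with a toy in-memory store. The `EmbeddingStore` class, its methods, and the user names are hypothetical. A production system would more likely rely on the access controls of a vector database or the surrounding infrastructure.

```python
class EmbeddingStore:
    """Toy in-memory store; a hypothetical stand-in for a vector database."""

    def __init__(self, allowed_users):
        self._allowed = set(allowed_users)
        self._vectors = {}

    def _check(self, user):
        # Restricted access: reject anyone not on the allow list.
        if user not in self._allowed:
            raise PermissionError(f"{user} may not access embeddings")

    def upsert(self, user, doc_id, vector):
        # Insert a new embedding or refresh an existing one.
        self._check(user)
        self._vectors[doc_id] = list(vector)

    def get(self, user, doc_id):
        self._check(user)
        return self._vectors.get(doc_id)

    def delete(self, user, doc_id):
        # Remove the embedding; deleting a missing id is a no-op.
        self._check(user)
        self._vectors.pop(doc_id, None)

store = EmbeddingStore(allowed_users={"alice"})
store.upsert("alice", "contract-7", [0.2, 0.7, 0.1])
store.upsert("alice", "contract-7", [0.3, 0.6, 0.1])  # refresh after the document changed
print(store.get("alice", "contract-7"))
```

Calling `store.delete("alice", "contract-7")` removes the vector, and any call made as a user outside `allowed_users` raises `PermissionError`.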

5. Reduced impact in case of incidents

Compared to sending full documents or training models with sensitive data, both the risk and the impact of a potential exposure are significantly reduced.

Embeddings do not replace a comprehensive security strategy.

However, when combined with other confidentiality, privacy, and data-control measures, they make it possible to analyse information with language models in a much safer way.

At Cloud Levante, embeddings are just one of the many layers used to protect data when designing and training systems that work with real, sensitive information.